- This event has passed.


Numerous AI documentation processes and practices have been developed in recent years, with goals that include improving transparency, safety, fairness, and accountability in the development and use of AI systems. Well-known AI documentation standards include Google’s Model Cards, Microsoft’s Datasheets for Datasets, IBM’s FactSheets, and, more recently, Meta’s System Cards. Notably, individual companies have led much of this work, raising the question of whether such practices could or should be standardized more broadly. Multi-stakeholder efforts, such as the Partnership on AI’s ABOUT ML initiative, and academic efforts, such as the recent proposal for Reward Reports for Reinforcement Learning, offer important alternative insights.

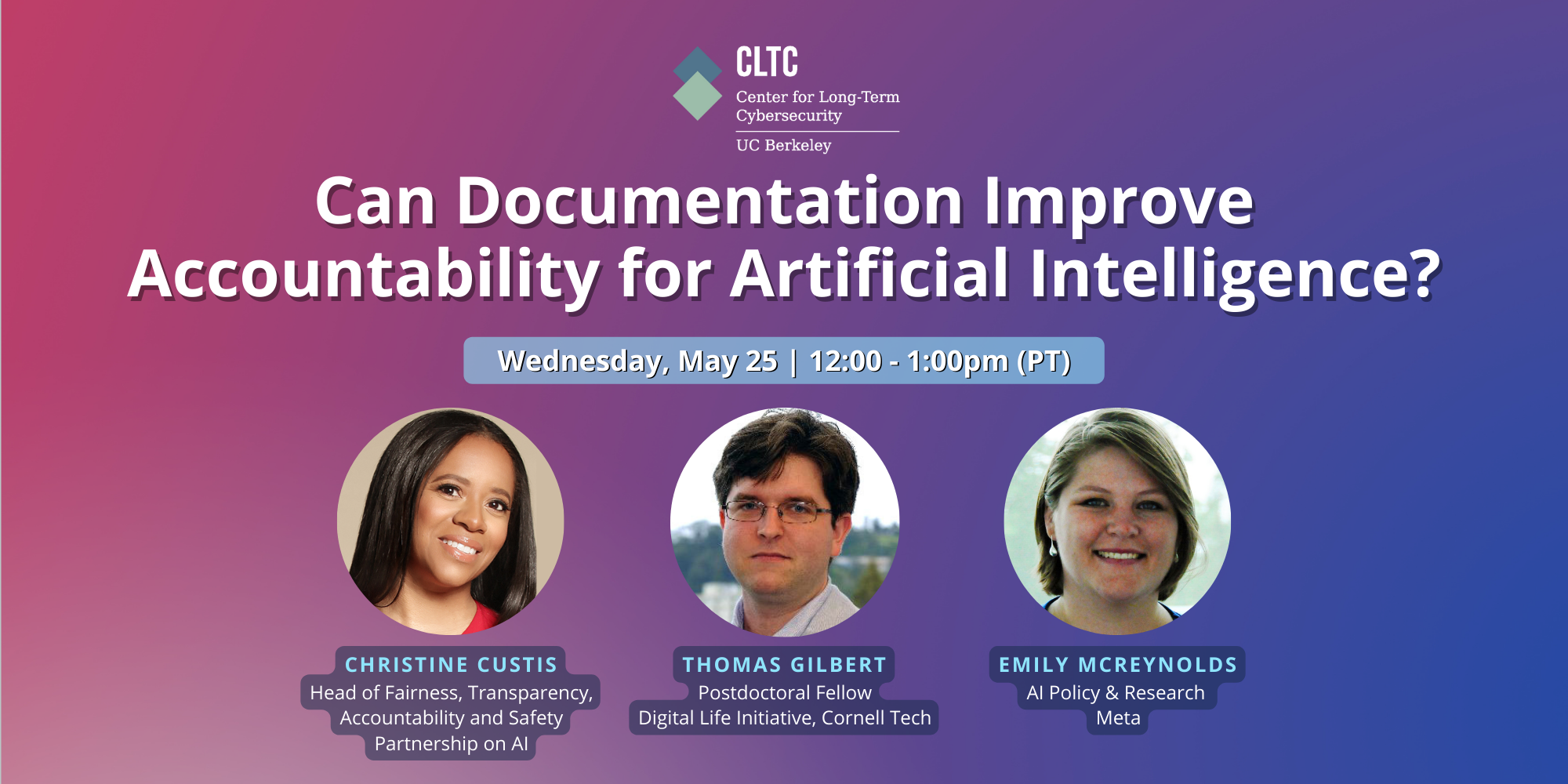

Join the UC Berkeley AI Security Initiative, hosted by the Center for Long-Term Cybersecurity, to learn about the current state of AI documentation, how far the AI community has come in adopting these practices, and new ideas to support trustworthy AI well into the future.

---

Accessibility Accommodations

If you require an accommodation for effective communication (ASL interpreting, CART captioning, alternative media formats, etc.) or information about mobility access in order to fully participate in this event, please contact Rachel Wesen at cltcevents@berkeley.edu with as much advance notice as possible, and at least 7–10 days before the event.